In our guide, Measurement Uncertainty in Force Measurement, we explained the rigorous, standards-based measurement uncertainty calculations that determine load cell precision in controlled lab environments. However, for most commercial applications, this rigorous testing is expensive and unnecessary. Instead, simple proof that the load cell performs within specified tolerances (even for “legal-for-trade” weighing) is sufficient.

To establish these performance tolerances, standards bodies have created load cell “classes” or levels of precision. These internationally recognized standards bodies have the backing of trade regulation within and among the countries that send representatives to their conferences. When a manufacturer stamps an NTEP badge on a datasheet, they are proving that a certified laboratory has verified the model’s compliance with these strict performance boundaries.

But when you buy an NTEP-certified load cell, what does its accuracy class actually tell you about its real-world measurement output? This guide breaks down the performance parameters defined by NIST Handbook 44 [1] so you can confidently select the right class for your application.

Key Takeaways

- The article explains load cell classes according to the U.S. Department of Commerce’s National Institute of Standards and Technology (NIST) Handbook 44.

- Load cell classes define the permissible uncertainty limits a device must meet for certification. NIST defines five load cell classes based on application and specifies requirements for their scale divisions and maximum permissible errors.

- In the U.S., the National Type Evaluation Program (NTEP) performs load cell testing to certify them, ensuring they meet specific performance parameters and tolerances for their class.

- To achieve certification, load cells must meet performance standards even when exposed to environmental stressors such as temperature and pressure.

- Regular calibration ensures that certified load cells continue to meet NIST standards and tolerances.

Standards Bodies Governing Load Cells

The National Type Evaluation Program (NTEP) is a testing and certification program administered by the National Conference on Weights and Measures (NCWM). NTEP evaluates weighing devices to ensure they comply with the full set of technical requirements published by the U.S. Department of Commerce’s National Institute of Standards and Technology (NIST) in Handbook 44. Note that this body governs weighing devices for commerce in the United States exclusively, and exists under the authority of Article 1, Section 8 of the U.S. Constitution.

For international standards, our companion article, Load Cell Classes: OIML Requirements, covers the parallel framework set by the International Organization of Legal Metrology (OIML) in Recommendation 60-1 (R60-1) [2]. Together, NIST and OIML define the standards for most load cells worldwide.

Below, we provide a practical summary of Handbook 44 performance parameters and outline how a manufacturer achieves NTEP certification. For the most current, unedited regulations, always refer directly to the full NIST documentation.

Background Concepts in Load Cell Requirements

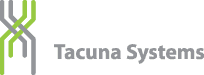

This section reviews basic terminology used in requirements for load cells and measuring systems. Figure 1 depicts them for further clarity.

Load Definitions

The common symbols used to describe loads, and their definitions, appear below.

| Term | Symbol | Definition |
|---|---|---|
| Minimum Dead Load | \(E_{min}\) | The smallest load (expressed in mass units) that can be applied to a load cell. |
| Minimum Load of the Measuring Range | \(D_{min}\) | The smallest load (expressed in mass units) applied to the load cell under test. It is required to be within 10% of \(E_{min}\). |
| Maximum Capacity | \(E_{max}\) | The largest load (expressed in mass units) that can be applied to a load cell, per manufacturer’s specifications. It is usually well below the safe load limit. |
| Maximum Load of the Measuring Range | \(D_{max}\) | The largest load (expressed in mass units) applied to the load cell under test. It is required to be between 90% and 100% of \(E_{max}\). |
| Safe Load Limit | \(E_{lim}\) | The greatest load (expressed in mass units) that can be applied to the load cell without permanently changing the performance of the load cell. |

Measuring Range Definitions

The common expressions for load cell measuring ranges are as follows.

| Term | Symbol | Definition |
|---|---|---|
| Load Cell Measuring Range | \(D_{max} - D_{min}\) | The range of mass values used for testing. |
| Maximum Measuring Range | \(E_{max} - E_{min}\) | The maximum range of mass values a load cell can accurately measure. |

Verification Interval Definitions

The concept of a verification interval is important to the definition of load cell classes. The common related terms are as follows.

| Term | Symbol | Definition |
|---|---|---|
| Value of the Scale Division | \(d\) | The smallest unit quantity of weight the measuring device indicator can display. In other words, the scale’s human-perceivable resolution. |
| Load Cell Verification Interval | \(v\) | The capacity increment of a load cell’s measuring range that is used for testing and certification. This governs each load cell component within the scale. |
| Minimum Load Cell Verification Interval | \(v_{min}\) | The smallest physical weight increment a load cell can reliably distinguish from background electronic and thermal noise. This is a physical limitation, not a certification limitation. |
| Verification Scale Interval | \(e\) | The minimum quantity that the scale’s measuring range is divided into for testing and certification. This is the certified resolution for the entire weighing system, not just the load cell component. It must be no more than \(10 \times d\) per NIST Handbook 44 requirements. |
| Maximum Number of Scale Divisions | \(n_{max}\) | The maximum number of divisions that the maximum measuring range can be divided into for certification. Note that \(v \times n_{max}\) equals the Maximum Measuring Range. |
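The relationships among these terms can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are our own, and the example load cell (5,000 kg capacity, \(n_{max}\) of 3,000) is hypothetical rather than taken from any datasheet.

```python
def verification_interval(e_max: float, e_min: float, n_max: int) -> float:
    """Load cell verification interval v, from v * n_max = E_max - E_min."""
    if n_max <= 0:
        raise ValueError("n_max must be positive")
    return (e_max - e_min) / n_max


def e_within_limit(e: float, d: float) -> bool:
    """Check the rule stated above that e must be no more than 10 x d."""
    return e <= 10 * d


# Hypothetical load cell: 5,000 kg capacity, zero minimum dead load, n_max = 3,000.
v = verification_interval(e_max=5000.0, e_min=0.0, n_max=3000)
print(f"v = {v:.3f} kg")

# Hypothetical indicator: d = 0.5 kg with e = 1 kg.
print(e_within_limit(e=1.0, d=0.5))
```

A smaller \(v\) for the same capacity means a higher \(n_{max}\), which is why \(n_{max}\) is the figure to compare when selecting among cells of the same class.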

Other Important Terms

| Term | Symbol | Definition |
|---|---|---|
| Apportioning Factor | \(p_{LC}\) | A unit-less multiplier applied to a load cell observed error to quantify the portion of that error attributable to the load cell alone. |

NIST Load Cell Classes and Their Required Performance

With the common understanding of these key terms, we can define each NIST load cell class and describe the measurement tolerances and precision associated with each.

NIST Classes and Their Commercial Application

NIST assigns five classes to load cells: I, II, III, III L, and IIII based on their applications. (See Table 7a in the Handbook.)

| NIST Load Cell Class | Application |
|---|---|
| I | Precision laboratory weighing |
| II | Laboratory weighing, precious metals and gems, grain test scales |
| III | All commercial weighing including grain test scales, retail precious metals and semi-precious gem weighing, grain-hopper scales, animal scales, postal scales, laundry scales, and on-board vehicle weighing systems up to a 30,000 lb capacity |
| III L | Large-capacity commercial scales such as vehicle scales, on-board vehicle weighing systems with a capacity greater than 30,000 lb, axle load scales, livestock, railway track, crane, and hopper scales (other than grain hoppers) |
| IIII | Weight measurement for highway weight enforcement such as axle load weighers |

Scale Divisions (Minimum Resolution) and Full Scale Requirements Per NIST Accuracy Class

For each of these load cell accuracy classes, NIST strictly specifies:

- the number of scale divisions (\(n\)),

- the value of the scale division (\(d\)), and

- the value of the verification scale interval (\(e\)).

The table below summarizes these generically, based on Table 3 from [1]. Note that NIST enforces additional, application-specific values for these parameters depending on the exact commercial weighing environment. Refer to Handbook 44 for these exceptions.

Table 1: Parameters for Accuracy Classes

| Class | Value of Verification Scale Division (e) | Minimum Number of Scale Divisions (n) | Maximum Number of Scale Divisions (n) |
|---|---|---|---|
| SI Units | | | |
| I | ≥ 1 mg | 50,000 | Not specified |
| II | 1 to 50 mg, inclusive | 100 | 100,000 |
| II | ≥ 100 mg | 5,000 | 100,000 |
| III | 0.1 to 2 g, inclusive | 100 | 10,000 |
| III | ≥ 5 g | 500 | 10,000 |
| III L | ≥ 2 kg | 2,000 | 10,000 |
| IIII | ≥ 5 g | 100 | 1,200 |
| U.S. Customary Units | | | |
| III | 0.0002 lb to 0.005 lb, inclusive | 100 | 10,000 |
| III | 0.005 oz to 0.125 oz, inclusive | 100 | 10,000 |
| III | ≥ 0.01 lb | 500 | 10,000 |
| III | ≥ 0.25 oz | 500 | 10,000 |
| III L | ≥ 5 lb | 2,000 | 10,000 |
| IIII | > 0.01 lb | 100 | 1,200 |
| IIII | > 0.25 oz | 100 | 1,200 |
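As a quick way to apply Table 1, the SI rows can be encoded as a lookup. This is a minimal sketch using only the values transcribed above; the names `TABLE_1_SI`, `n_limits`, and `is_valid_n` are our own, and the application-specific exceptions in Handbook 44 are not modeled.

```python
# Rows transcribed from Table 1 (SI units):
# (class, lowest e in grams, highest e in grams or None, minimum n, maximum n or None)
TABLE_1_SI = [
    ("I", 0.001, None, 50_000, None),
    ("II", 0.001, 0.05, 100, 100_000),
    ("II", 0.1, None, 5_000, 100_000),
    ("III", 0.1, 2.0, 100, 10_000),
    ("III", 5.0, None, 500, 10_000),
    ("III L", 2000.0, None, 2_000, 10_000),
    ("IIII", 5.0, None, 100, 1_200),
]


def n_limits(accuracy_class: str, e_grams: float):
    """Return (n_min, n_max) for the matching Table 1 row, or None if no row covers e."""
    for cls, low, high, n_min, n_max in TABLE_1_SI:
        if cls == accuracy_class and e_grams >= low and (high is None or e_grams <= high):
            return n_min, n_max
    return None


def is_valid_n(accuracy_class: str, e_grams: float, n: int) -> bool:
    """True if n falls inside the limits Table 1 gives for this class and e."""
    limits = n_limits(accuracy_class, e_grams)
    if limits is None:
        return False
    n_min, n_max = limits
    return n >= n_min and (n_max is None or n <= n_max)


# Hypothetical Class III scale with e = 1 g and 5,000 divisions.
print(n_limits("III", 1.0), is_valid_n("III", 1.0, 5_000))
```

A value of `e` that falls between two rows (for example 3 g for Class III) returns `None`, reflecting that Table 1 lists no row for it.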

Maximum Permissible Error Per Load Cell Class

Unlike the OIML framework, NIST provides its primary load cell error table specifically under time-dependence guidelines to quantify permissible errors due to creep and creep recovery.

Table 2 (from Table T.N.4.6 of [1]) outlines these Maximum Permissible Errors (MPE) per load cell class for type evaluation testing only. In the NIST lexicon, “type evaluation” refers to the rigorous, independent laboratory testing a load cell model must undergo to achieve its initial NTEP certification.

NTEP Compliance Note: Type evaluation tolerances apply strictly to the manufacturer’s prototype testing phase. Once a scale is deployed in a real-world commercial setting, it is subject to additional, periodic inspections using maintenance tolerances to ensure it remains legal-for-trade.

Table 2: MPE During Type Evaluation For Each Load Cell Class

MPE is expressed in load cell verification divisions (v) as pLC × basic tolerance. Each cell gives the load range to which that column's MPE applies.

| Class | MPE = pLC × 0.5 v | MPE = pLC × 1.0 v | MPE = pLC × 1.5 v |
|---|---|---|---|
| I | 0 – 50,000 v | 50,001 – 200,000 v | 200,001 v + |
| II | 0 – 5,000 v | 5,001 – 20,000 v | 20,001 v + |
| III | 0 – 500 v | 501 – 2,000 v | 2,001 v + |
| IIII | 0 – 50 v | 51 – 200 v | 201 v + |
| III L | 0 – 500 v | 501 – 1,000 v | Add 0.5 v to basic tolerance for each additional 500 v (Max 10,000 v) |

In the table, \(v\) represents the load cell verification interval (as we established before), and \(p_{LC}\) is the apportionment factor (the proportion of error attributable to the load cell alone). NIST gives specific apportionment factor values per application as follows:

- \(p_{LC} = 0.7\) for load cells of any class other than III L marked “S” (intended for single load cell applications).

- \(p_{LC} = 1\) for load cells of any class other than III L marked “M” (intended for multiple load cell applications).

- \(p_{LC} = 0.5\) for all Class III L load cells, whether marked M or S.

NIST allows a higher permissible error for individual load cells in multi-cell applications since they bear only a fraction of the total load. This means that they each contribute less error to the system than they would in a solo application. Also, statistically, their random errors tend to cancel each other when their output signals combine at the junction box.
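To make the Table 2 lookup concrete, here is a minimal sketch that returns the type evaluation MPE, in units of \(v\), for a given class, test load, and S/M marking. The function and constant names are our own. One assumption is flagged in the code: for Class III L loads above 1,000 v, a partial 500 v step is treated as a full step, mirroring the "or fraction thereof" wording used for maintenance tolerances.

```python
import math

# Apportionment factors quoted in this article.
P_LC_BY_MARKING = {"S": 0.7, "M": 1.0}  # any class other than III L
P_LC_CLASS_III_L = 0.5                  # Class III L, marked S or M

# Upper load bounds (in v) of the 0.5 v and 1.0 v tiers in Table 2.
TIER_BOUNDS_V = {
    "I": (50_000, 200_000),
    "II": (5_000, 20_000),
    "III": (500, 2_000),
    "IIII": (50, 200),
}


def basic_tolerance_v(accuracy_class: str, load_v: float) -> float:
    """Basic tolerance, in v, for a test load expressed in v (Table 2)."""
    if load_v < 0:
        raise ValueError("load must be non-negative")
    if accuracy_class == "III L":
        if load_v > 10_000:
            raise ValueError("Table 2 covers Class III L only up to 10,000 v")
        if load_v <= 500:
            return 0.5
        if load_v <= 1_000:
            return 1.0
        # Assumption: a partial 500 v step counts as a full step.
        return 1.0 + 0.5 * math.ceil((load_v - 1_000) / 500)
    first, second = TIER_BOUNDS_V[accuracy_class]
    if load_v <= first:
        return 0.5
    if load_v <= second:
        return 1.0
    return 1.5


def load_cell_mpe_v(accuracy_class: str, load_v: float, marking: str = "S") -> float:
    """MPE in v: the apportionment factor times the basic tolerance."""
    if accuracy_class == "III L":
        p_lc = P_LC_CLASS_III_L
    else:
        p_lc = P_LC_BY_MARKING[marking]
    return p_lc * basic_tolerance_v(accuracy_class, load_v)


print(load_cell_mpe_v("III", 1_500, "S"))  # 0.7 x 1.0 v
print(load_cell_mpe_v("III L", 2_000))     # 0.5 x 2.0 v
```

Multiplying the result by the cell's \(v\) in mass units converts the MPE into kilograms or pounds.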

Tolerance Types and Values per Accuracy Class

Beyond the MPE for the component load cells, NIST sets specific, total-weigh-system performance tolerances for each accuracy class. These limits change proportionally with the value of the scale division, \(d\).

NIST splits these tolerances into two distinct categories:

Acceptance Tolerances

Acceptance tolerances isolate baseline device errors (such as hysteresis) under strictly controlled environments. These narrow testing conditions require a specified temperature range, stable barometric pressure, and a consistent power supply.

These tight limits apply for the following scenarios:

- When performing load cell type evaluation,

- Before deploying a weighing device for commercial use for the first time,

- Within 30 days of corrective service for any NTEP non-compliance, or

- Within 30 days of an overhaul or reconditioning of the system.

Maintenance Tolerances

Maintenance tolerances apply to equipment in use or field testing. Systems must stay within these bounds regardless of the ambient conditions. Because these conditions can vary widely, maintenance tolerances are generally twice the limits for acceptance tolerances.

Table 3 outlines the basic maintenance tolerance requirements per accuracy class (adapted from NIST Handbook 44, Table 6 [1]). Because NIST enforces additional requirements for unique applications (such as postal scales or axle-load weighers), device owners should consult Handbook 44 for their specific application rules.

This table shows that allowable weigh-system errors scale up in tiers as the load increases. For example, if you have a Class III scale with a scale division \(d\) of 1 lb, the system allows a maximum maintenance error of just 1 lb for any test load between 0 and 500 lbs. If you increase the test load to between 501 and 2,000 lbs, the allowable error budget steps up to 2 lbs.

Table 3: Maintenance Tolerances Per Accuracy Class

Tolerances are expressed in scale divisions (d). Each cell gives the test load range, in d, to which that column's tolerance applies.

| Class | 1 d | 2 d | 3 d | 5 d |
|---|---|---|---|---|
| I | 0 – 50,000 | 50,001 – 200,000 | 200,001 + | Not applicable |
| II | 0 – 5,000 | 5,001 – 20,000 | 20,001 + | Not applicable |
| III | 0 – 500 | 501 – 2,000 | 2,001 – 4,000 | 4,001 + |
| IIII | 0 – 50 | 51 – 200 | 201 – 400 | 401 + |
| III L | 0 – 500 | 501 – 1,000 | Add 1 d for each additional 500 d or fraction thereof (applies above 1,000 d) | |
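The tiers in Table 3 translate directly into a lookup. The following is a rough sketch with names of our own choosing; it covers only the basic tolerances in Table 3 and none of the application-specific rules in Handbook 44. It reproduces the Class III example given above, using a scale division of 1 lb.

```python
import math

# (upper bound of test load in d, tolerance in d), transcribed from Table 3.
MAINTENANCE_TIERS = {
    "I": [(50_000, 1), (200_000, 2), (math.inf, 3)],
    "II": [(5_000, 1), (20_000, 2), (math.inf, 3)],
    "III": [(500, 1), (2_000, 2), (4_000, 3), (math.inf, 5)],
    "IIII": [(50, 1), (200, 2), (400, 3), (math.inf, 5)],
}


def maintenance_tolerance_d(accuracy_class: str, load_d: float) -> int:
    """Maintenance tolerance, in scale divisions d, for a test load given in d."""
    if load_d < 0:
        raise ValueError("load must be non-negative")
    if accuracy_class == "III L":
        if load_d <= 500:
            return 1
        if load_d <= 1_000:
            return 2
        # Add 1 d for each additional 500 d or fraction thereof.
        return 2 + math.ceil((load_d - 1_000) / 500)
    for upper, tolerance in MAINTENANCE_TIERS[accuracy_class]:
        if load_d <= upper:
            return tolerance
    raise ValueError("unreachable: last tier is unbounded")


def maintenance_tolerance(accuracy_class: str, load: float, d: float) -> float:
    """Same tolerance, converted to the units of the load and d."""
    return maintenance_tolerance_d(accuracy_class, load / d) * d


# Class III scale with d = 1 lb, as in the example above.
print(maintenance_tolerance("III", 400, 1.0))    # test load of 400 lb
print(maintenance_tolerance("III", 1_500, 1.0))  # test load of 1,500 lb
```

Since maintenance tolerances are generally twice the acceptance tolerances, halving the result gives a first estimate of the acceptance limit for the same load.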

Individual Error Parameters and Ambient Limits per Accuracy Class

The total scale error described by Table 3 combines several distinct mechanical and environmental factors. NIST isolates these parameters during testing to further prove that the overall system can successfully maintain these tolerances in real-world environments.

Limits on Repeatability Error per Scale Accuracy Class

Repeatability measures a weighing system’s ability to display the same weight value for repeated weighings of the same load.

NIST does not specify how many times a technician must weigh a load within a certain timeframe. Instead, Handbook 44 simply mandates a hard ceiling: successive weighings of the same load under identical conditions must agree within the maintenance tolerances shown in Table 3. If a Class III floor scale allows a 2 lb tolerance for a specific load, the display cannot fluctuate by more than 2 lbs between repeated tests.

Limits on Creep Error per Load Cell Accuracy Class

Creep occurs when a load cell stays under a heavy weight for an extended period, causing its digital output signal to slowly drift over time. If a sensor creeps too much, a static pallet or truck will appear to gain or lose weight the longer it sits on the scale.

NIST evaluates creep during laboratory type testing using the sensor’s maximum recommended load (\(D_{max}\)). Like OIML R60, this load must fall between 90% and 100% of its absolute physical capacity (\(E_{max}\)). Handbook 44 enforces two strict time-dependent limits:

- The 30-Minute Test: When a technician loads a cell to \(D_{max}\) for 30 minutes, the total change between the initial reading and any subsequent reading during the test cannot exceed the absolute Maximum Permissible Error (\(|MPE|\)) calculated from Table 2.

- The 20-to-30 Minute Window: To catch late-stage signal drift, the final reading at the 30-minute mark cannot differ from the reading taken at the 20-minute mark by more than 0.15 times the \(|MPE|\).
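The two creep limits can be expressed as a simple pass/fail check. This is a sketch under stated assumptions: `passes_creep_test` is a hypothetical helper, readings are given in units of \(v\) and keyed by elapsed minutes, and the sample readings are invented for illustration.

```python
def passes_creep_test(readings: dict, mpe: float) -> bool:
    """Apply both creep limits to readings keyed by elapsed minutes.

    readings must contain entries for minutes 0, 20, and 30.
    mpe is the maximum permissible error from Table 2, in the same units.
    """
    limit = abs(mpe)
    initial = readings[0]
    # 30-minute test: no reading may differ from the initial one by more than |MPE|.
    within_total = all(
        abs(value - initial) <= limit
        for minute, value in readings.items()
        if 0 < minute <= 30
    )
    # 20-to-30 minute window: late drift limited to 0.15 x |MPE|.
    within_window = abs(readings[30] - readings[20]) <= 0.15 * limit
    return within_total and within_window


# Invented readings (in v) for a cell with an MPE of 0.7 v.
steady = {0: 0.0, 5: 0.2, 10: 0.3, 20: 0.40, 30: 0.45}
drifting = {0: 0.0, 20: 0.3, 30: 0.5}
print(passes_creep_test(steady, mpe=0.7))
print(passes_creep_test(drifting, mpe=0.7))
```

The second data set stays inside the overall \(|MPE|\) but still fails, because its drift between minutes 20 and 30 exceeds 0.15 times the \(|MPE|\).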

Limits on Dead Load Output Return Per Load Cell Class

Dead Load Output Return measures how perfectly a load cell returns to its baseline zero balance after a heavy load is removed. A quality load cell spring element will return to its original shape without “memory” of the large load, rather than show a false-positive reading.

Technicians record the zero-load reading (\(D_{min}\)) immediately before and immediately after running the 30-minute creep test described above. To pass certification, the post-test zero reading cannot differ from the pre-test baseline by more than the following component-level limits:

| Class | Recovery Value |
|---|---|
| II and IIII | \(0.5v\) |
| III where \(n \leq 4000\) | \(0.5v\) |
| III where \(n > 4000\) | \(0.83v\) |
| III L | \(2.5v\) |
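A short sketch of the dead load output return check follows, using only the recovery values listed above. The function names are our own, and classes without a listed value raise an error rather than guess at a limit.

```python
def recovery_limit_v(accuracy_class: str, n: int = None) -> float:
    """Dead load output return limit, in v, from the values listed above."""
    if accuracy_class in ("II", "IIII"):
        return 0.5
    if accuracy_class == "III":
        if n is None:
            raise ValueError("Class III needs n to select the limit")
        return 0.5 if n <= 4000 else 0.83
    if accuracy_class == "III L":
        return 2.5
    raise ValueError(f"no recovery value listed for class {accuracy_class!r}")


def passes_zero_return(zero_before: float, zero_after: float,
                       accuracy_class: str, n: int = None) -> bool:
    """Compare zero-load readings (in v) taken before and after the creep test."""
    return abs(zero_after - zero_before) <= recovery_limit_v(accuracy_class, n)


# Hypothetical Class III cell with n = 5,000 and a 0.6 v shift in zero reading.
print(passes_zero_return(0.0, 0.6, "III", n=5_000))
```

The same 0.6 v shift would fail for a Class III cell with \(n \leq 4000\), where the limit is \(0.5v\).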

Temperature Boundaries Where Error Tolerances Must Be Met

NIST, like OIML, has strict performance requirements for ambient temperatures as real-world applications demand accuracy for widely variable conditions. By default, NIST performance tolerances and MPE limits apply across a standard temperature range of -10°C to 40°C (14°F to 104°F) for all accuracy classes.

If a manufacturer designs a weighing system for extreme environments outside this standard window, the customized operating temperature range must span at least the following minimums per class:

| Accuracy Class | Minimum Temperature Span |
|---|---|
| Class I | \(5^{\circ}\)C (\(9^{\circ}\)F) |
| Class II | \(15^{\circ}\)C (\(27^{\circ}\)F) |
| Class III, III L, IIII | \(30^{\circ}\)C (\(54^{\circ}\)F) |

For Class III devices and higher, NIST requires a built-in safety cutoff. If the ambient air temperature drops below or rises above the scale’s certified operating range, the display screen must automatically shut off or indicate no weight reading. Normal operation can only resume once the hardware’s internal temperature returns to its certified range.

Further Temperature Boundaries: Zero Balance Variation Per Temperature Change

Even when a scale bears no load, extreme temperature swings can cause the internal electronics to drift, resulting in a false weight reading. NIST restricts this component-level drift.

The empty zero balance value for a compliant load cell cannot vary by more than:

- 3 load cell verification intervals (\(3v\)) per 5°C (9°F) temperature change for Class III L sensors.

- 1 load cell verification interval (\(1v\)) per 5°C (9°F) temperature change for all other accuracy classes.
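For a rough feel of what these limits allow, the sketch below scales the per-5°C limit to an arbitrary temperature change. The linear proration is our own simplifying assumption, not something stated in this article, and `max_zero_shift_v` is a hypothetical helper name.

```python
def max_zero_shift_v(accuracy_class: str, delta_t_c: float) -> float:
    """Allowed zero balance change, in v, for a temperature change in degrees C.

    Assumption: the per-5 degree limit is prorated linearly with temperature change.
    """
    per_5_c = 3.0 if accuracy_class == "III L" else 1.0
    return per_5_c * abs(delta_t_c) / 5.0


# A 20 degree C swing, for example from 5 C to 25 C.
print(max_zero_shift_v("III", 20.0))
print(max_zero_shift_v("III L", 20.0))
```

Multiplying the result by the cell's \(v\) in mass units gives the allowed zero shift in kilograms or pounds.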

Barometric Pressure Boundaries Where Error Tolerances Apply

Likewise, changes in barometric pressure as the weather shifts can affect accuracy.

For all load cell classes except for Class I, the zero indication variance must be one scale division or less for a change in barometric pressure of 1 kPa over the total barometric pressure range of 95 kPa to 105 kPa (28 in to 31 in of Hg).

Scale Functions when Other Environmental Factors Affect Accuracy

NIST Handbook 44 (Section 2) also outlines strict operational cutoffs for radio disturbances, power interruptions, and low power. Outside these limits, the scale must automatically shut off or indicate no reading.

NTEP Certificates of Compliance

Again, the National Type Evaluation Program (NTEP) is responsible for testing measuring equipment and issuing certificates of compliance. A device receives an NTEP certificate when it passes rigorous (sometimes months long) testing against the above requirements at an NTEP-approved laboratory. Typically, a manufacturer seeking to use their product for trade, commerce, law enforcement, or government data collection submits a sample unit to the laboratory. If the equipment fails, it must be resubmitted within 90 days. If a design fails three testing cycles, it will be rejected unless the manufacturer provides acceptable proof that it has corrected the model’s deficiencies.

Compliance Test Equipment Accuracy

NIST specifies the error of the test equipment used to certify measuring systems as equal to or less than either:

- 1/3 the allowed load cell tolerance, or

- 70% of the tolerance of the entire end-to-end measuring system.

Required Labeling for Compliant Devices

To obtain a certificate of compliance, a weighing device must not only pass performance tests; it must also display at least the following markings:

- Manufacturer’s identification

- Model number

- Serial number

- Load cell accuracy class

- Nominal capacity and value of the scale division (\(d\))

- Any special operating temperature range, if outside the \(14^{\circ}\)F to \(104^{\circ}\)F default

- Whether rated for single or multiple load cell applications

Active vs Inactive NTEP Compliance

A load cell with active NTEP conformance status is a device manufactured, sold, or deployed under a current certificate of conformance. This means the tested prototype of that load cell has attained NTEP compliance, and the certification continues to be maintained. A load cell sold with inactive NTEP conformance status is a device that was manufactured under an active certificate of conformance, but the certificate has since expired while the device has been in inventory or in commercial use.

Whole System vs. Component Compliance

Often, laboratories test only components of a measuring system (for example, individual load cell models) rather than the entire system. A system built with certified components is not itself considered compliant unless the system as a whole is also tested for compliance. Conversely, if a whole measuring device is certified as compliant, the individual load cells in the system are not considered individually certified unless they have been individually tested. Therefore, if its internal load cells are replaced with a different model, a measuring system will no longer be NTEP compliant without passing compliance tests anew.

The component manufacturer is responsible for compliance testing of its component models; likewise the scale manufacturer is responsible for obtaining its system’s certificate of compliance. See our article on legal for trade scales for more information on whole system compliance.

Conclusion

This document has explained at a high level the tolerances and performance requirements imposed by NIST. A certified load cell of a specified class should meet these requirements with 100% certainty when regularly calibrated. A certified load cell that does not meet these tolerances must be taken out of service to be repaired or replaced.

This document also explains how load cell class relates to its divisions of resolution. These divisions help determine the measurement intervals detectable by a weighing system using that particular load cell. For more detailed information on this topic, see our article What Is the Lowest Weight a Load Cell Can Measure.

As always, contact Tacuna Systems with any issues regarding our load cells behaving outside of their specifications.

References

[1] NIST Handbook 44, Specifications, Tolerances, and Other Technical Requirements for Weighing and Measuring Devices, as adopted by the 104th National Conference on Weights and Measures, 2019, National Institute of Standards and Technology, US Department of Commerce (latest version, 2025 as adopted by the 109th National Conference on Weights and Measures)

[2] OIML R 60-1, Metrological regulation for load cells Part 1: Metrological and technical requirements, Organisation Internationale de Métrologie Légale, Edition 2017 (latest version 22 November 2021)

[3] R 60 OIML-CS rev.2 Additional requirements from the United States Accuracy class III L, Organisation Internationale de Métrologie Légale, January 2018